Rupin Mohan, HPE

The SCSI protocol has been the bedrock of storage for more than three decades, and it has served (and will continue to serve) customers admirably. SCSI protocol stacks are ubiquitous across host operating systems, storage arrays, devices, test tools, etc. It’s not hard to understand why: SCSI is a high-performance, reliable protocol with comprehensive error and recovery management mechanisms built in.

Even so, in recent years flash and SSDs have challenged the performance limits of SCSI because they have eliminated the moving parts: they do not have to rotate media or move disk heads. As a result, the traditional maximum I/O queue depth of 32 or 64 outstanding SCSI READ or WRITE commands is proving insufficient, as SSDs can service a much higher number of READ or WRITE commands in parallel. In addition, host operating systems manage queues differently, which adds complexity to fine-tuning the SCSI stack for higher performance.

To address this, a consortium of industry vendors began work on the Non-Volatile Memory Express (NVM Express, or NVMe) protocol. The key benefit of this new protocol is that a storage subsystem or storage stack can issue and service thousands of disk READ or WRITE commands in parallel, with greater scalability than traditional SCSI implementations. The effects are greatly reduced latency as well as dramatically increased IOPS and MB/sec metrics.
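To see why queue depth matters, Little's Law gives a rough upper bound: sustained throughput cannot exceed the number of outstanding commands divided by the latency of each command. The sketch below is only an illustration; the function name `max_iops` and the 100-microsecond latency are assumptions chosen for the example, not measurements of any device.

```python
def max_iops(queue_depth: int, latency_s: float) -> float:
    """Upper bound on IOPS from Little's Law: outstanding commands / latency."""
    if queue_depth <= 0 or latency_s <= 0:
        raise ValueError("queue_depth and latency_s must be positive")
    return queue_depth / latency_s


# Assumed per-command latency of 100 microseconds (illustrative only).
ASSUMED_LATENCY_S = 100e-6

# Compare the traditional SCSI queue depths with a much deeper queue.
for depth in (32, 64, 1024):
    bound = max_iops(depth, ASSUMED_LATENCY_S)
    print(f"queue depth {depth:>5}: at most {bound:,.0f} IOPS")
```

The bound only holds if the device can actually work on every queued command in parallel, which is the property of SSDs that a queue depth of 32 or 64 fails to exploit.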

Shared Storage with NVMe over Fabrics

The next hurdle facing the storage industry is how to deliver this level of storage performance, given the new metrics, over a storage area network (SAN). While there are a number of pundits forecasting the demise of the SAN, sharing storage over a network has a number of benefits that many enterprise storage customers enjoy and are reluctant to give up. These are:

- More efficient use of storage, which can help avoid “storage islands”

- Full-featured, mature storage services such as snapshots, backup, replication, thin provisioning, de-duplication, encryption, compression, etc.

- Enabling advanced cluster applications

- Multiple levels of disk virtualization and RAID levels

- Offering no single point of failure

- Ease of management with storage consolidation

The challenge facing the storage industry is to develop a really low-latency SAN that can potentially deliver improved I/O performance.

NVMe over Fabrics is essentially an extension of the Non-Volatile Memory Express (NVMe) standard, which was originally designed for PCIe-based architectures. However, because PCIe is a bus architecture and is not well suited to fabric architectures, accessing NVMe devices at scale needed special attention: hence, NVMe over Fabrics (NVMe-oF).

The goal and design of NVMe over Fabrics is straightforward: the key NVMe command structure should be transport-agnostic. That is, the ability to communicate NVMe should not depend on the transport.
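The idea of a transport-agnostic command can be sketched in a few lines: the command is encoded once, and any transport that can carry bytes can deliver it. Everything here is hypothetical and simplified for illustration. `NvmeCommand`, `Transport`, `LoopbackTransport`, and `submit` are made-up names, and the byte layout is not the real NVMe submission queue entry or NVMe-oF capsule format. Only the READ and WRITE opcode values follow the NVM command set.

```python
import struct
from dataclasses import dataclass
from typing import List, Protocol

# NVM command set opcodes for the two commands discussed in the text.
OPCODE_WRITE = 0x01
OPCODE_READ = 0x02


@dataclass(frozen=True)
class NvmeCommand:
    opcode: int
    namespace_id: int
    start_lba: int
    num_blocks: int

    def encode(self) -> bytes:
        # Simplified, hypothetical layout; NOT the real wire format.
        return struct.pack(
            "<BIQH", self.opcode, self.namespace_id, self.start_lba, self.num_blocks
        )


class Transport(Protocol):
    """Anything that can carry an encoded command (hypothetical interface)."""

    name: str

    def send(self, capsule: bytes) -> bytes: ...


class LoopbackTransport:
    """Stand-in for a real fabric: records the bytes and hands them back."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.sent: List[bytes] = []

    def send(self, capsule: bytes) -> bytes:
        self.sent.append(capsule)
        return capsule


def submit(command: NvmeCommand, transport: Transport) -> bytes:
    # The command is encoded without any knowledge of the transport.
    return transport.send(command.encode())


read = NvmeCommand(OPCODE_READ, namespace_id=1, start_lba=0, num_blocks=8)
rdma = LoopbackTransport("rdma")
fc = LoopbackTransport("fc")

# The same encoded command travels unchanged over either transport.
assert submit(read, rdma) == submit(read, fc)
```

In a real stack the transports differ greatly in how they move data and report completion, but the design choice shown here is the same: the command definition sits above the transport, so adding a new fabric does not change the command set.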

As of this writing, there are two standardized methods by which NVMe-oF can be achieved:

- RDMA-based NVMe – This project is working on creating a fabric and related constructs to extend the NVMe protocol over a shared Ethernet or InfiniBand network. The same group developing the NVMe PCIe specification is also working on the fabric specification. The group is also discussing NVMe over TCP/IP and other emerging network architectures.

- NVMe over Fibre Channel (FC-NVMe) – This is a new T11 project to define an NVMe over Fibre Channel protocol mapping, with engineers from leading storage companies actively working on the standard. Fibre Channel is a transport that has traditionally solved the problem of carrying SCSI over longer distances to enable shared storage. Put simply, Fibre Channel transports SCSI READ or WRITE commands, and the corresponding data and status, over a network. The T11 group is actively working on enabling the protocol to transport NVMe READ or WRITE commands compatibly over the same FC transport.

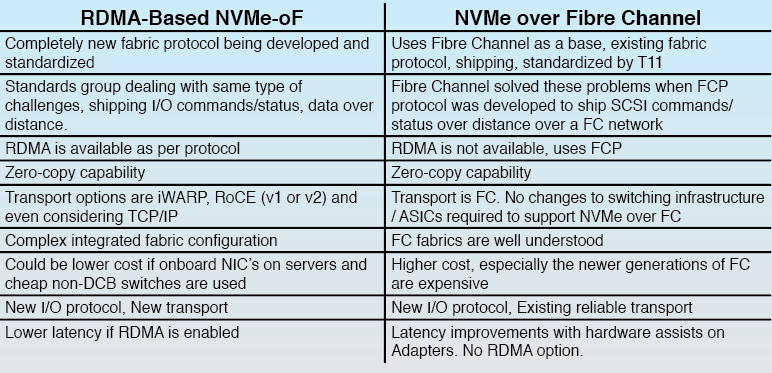

So, how do these two options compare? Here are some thoughts.

The FC protocol provides a solid foundation for extending NVMe over Fabrics, as it already extended SCSI over fabrics almost two decades ago. From a practical development perspective, the T11 group is far ahead in developing FC-NVMe because the Fibre Channel protocol was developed from the beginning with the end-to-end ecosystem in mind. Most mission-critical storage systems run on Fibre Channel today, and NVMe is poised to boost those mission-critical capabilities even further.

As an 80/20 illustration, existing Fibre Channel protocol constructs solve about 80% of the NVMe over Fabrics problem; the T11 group has drafted a protocol mapping standard and is actively working on the remaining 20%.

In terms of engineering work completed, the Fibre Channel solution solves more than just the connectivity problems; it’s laser-focused on ensuring that administrators and end users of NVMe over Fabrics get the level of quality they’ve come to expect from a dominant storage networking protocol for SAN connectivity.

Conclusion

NVMe will increase storage performance by orders of magnitude as the protocol and products come to life and mature with product lifecycles.

There is more to Data Center storage solutions than speeds and connectivity. There is reliability, high-availability, and end-to-end resiliency. There is the assurance that all the pieces of the puzzle will fit together, the solution can be qualified, and customers can be confident that adopting a new technology such as NVMe can come with some well-understood, battle-hardened, rock-solid technology.

With more than 20 years of a time-tested, tried-and-true track record, there is no better bet than Fibre Channel.